Table of Contents

- Key Points

- Introduction to Local Machine Learning Systems

- Advanced Tips for Enhancing Your ML System

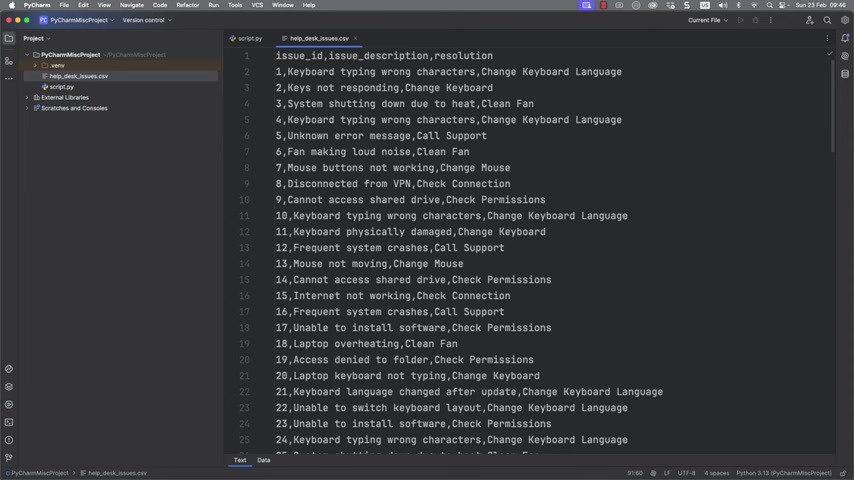

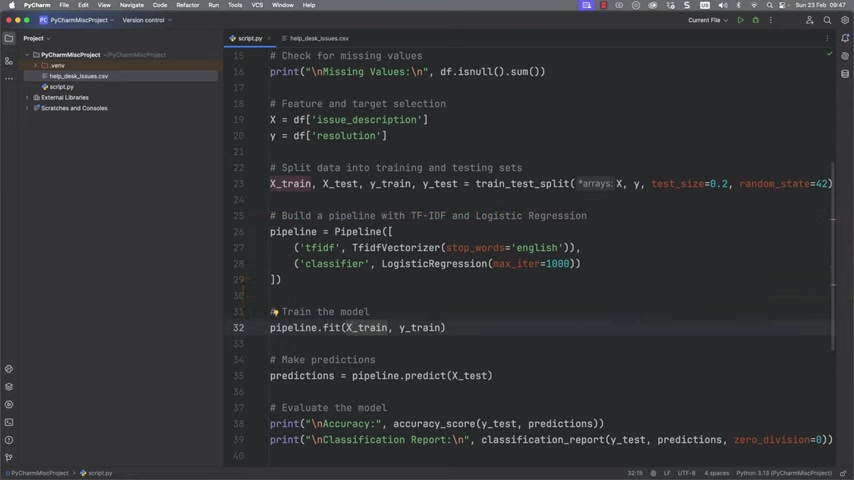

- Step-by-Step Guide to Building a Local Python ML System

- Cost Analysis: The Benefits of a Local ML System

- Advantages and Disadvantages of a Local ML System

- Frequently Asked Questions

- Related Questions
