Table of Contents

- Key Points

- Building a Convolutional Neural Network for Image Recognition

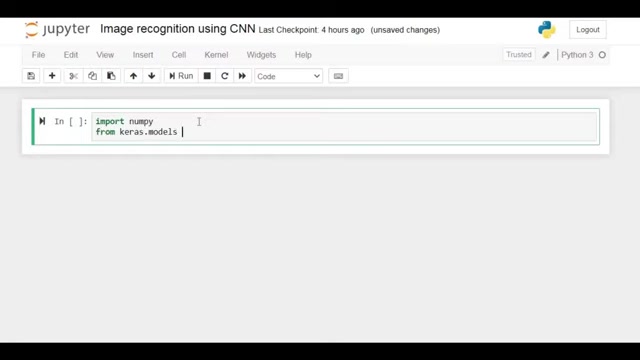

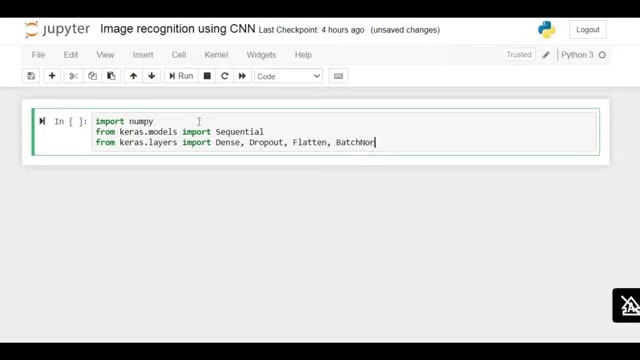

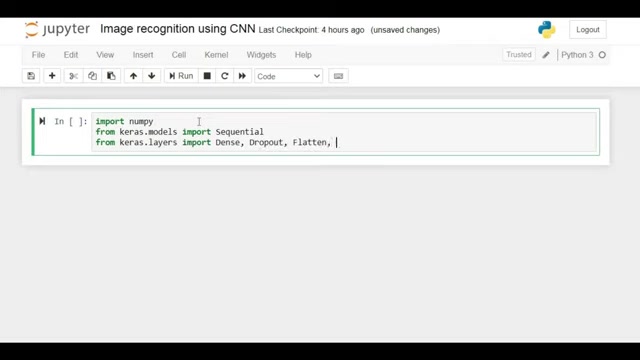

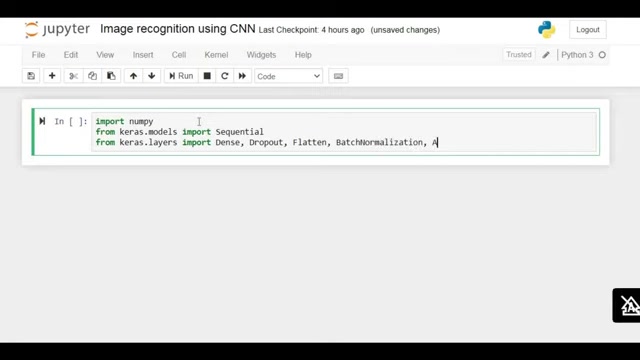

- Step-by-Step Guide to Implementing Image Recognition

- Tools and Resources Pricing

- Advantages and Disadvantages of Using CNNs for Image Recognition

- Key Benefits of CNN for Image Recognition

- Applications of Image Recognition with CNN

- Frequently Asked Questions (FAQ)

- Related Questions
