Streamlining Computer Vision Workflows with DataOps

Table of Contents

- Introduction

- What is Data Ops?

- Reasons for the Rise of Data Ops

- Key Principles of Data Ops

- The Modern Computation Stack

- Challenges in Data Curation

- Automation and Orchestration of Data Flows

- Ensuring Data Quality in the Data Lifecycle

- Monitoring Quality and Performance Metrics

- The Importance of Collaboration among Data Stakeholders

- The Future of the Modern Computation Stack

- Startup Opportunities in the ML Infrastructure Ecosystem

Highlights

- Introduction to Data Ops

- The reasons for the rise of Data Ops

- Key principles and best practices of Data Ops

- Overview of the modern computation stack

- Challenges in data curation

- Automation and orchestration of data flows

- Importance of data quality and performance monitoring

- Collaboration among data stakeholders

- The future of the modern computation stack

- Startup opportunities in the ML infrastructure ecosystem

Data Ops: Transforming the Modern Computation Stack

By James Day

Data Ops has emerged as a crucial discipline in the field of computer vision and machine learning. In this article, we will explore the key principles and best practices of Data Ops, as well as its impact on the modern computation stack. We will also discuss the challenges faced in data curation, the importance of automation and orchestration of data flows, and the need for collaboration among data stakeholders. Finally, we will examine the future of the modern computation stack and explore the exciting startup opportunities in the ML infrastructure ecosystem.

Introduction

In today's data-driven world, the ability to efficiently process and analyze large volumes of data is crucial for businesses to stay competitive. This is where Data Ops comes into play. Data Ops borrows its name and approach from DevOps and focuses on the transformation and delivery capabilities of analytical teams. Just as DevOps changed how software is built and shipped, Data Ops is changing the way data is handled and processed in the modern computation stack.

What is Data Ops?

Data Ops can be defined as a practice that brings different data teams together to work collaboratively and efficiently, so that they can ensure data quality, sound data engineering, and data security, and obtain valuable insights from data. It encompasses best practices and principles borrowed from software engineering and DevOps, such as version control, automation, testing, monitoring, and collaboration.

Reasons for the Rise of Data Ops

There are three main reasons why Data Ops has gained importance in the field of computer vision and machine learning. First, the massive volume of complex data generated from various sources requires efficient tools and technologies to handle and process it. Second, technology overload, with numerous tools available for data transformation, cleaning, and analysis, calls for a streamlined approach to choosing and using the right tools. Finally, the diverse roles and mandates of the people working with data, such as data engineers, data scientists, and business managers, create collaboration overload and friction between teams, highlighting the need for a better framework for productive teamwork.

Key Principles of Data Ops

To implement Data Ops successfully, there are several key principles and best practices to follow. These include:

1. Implementing best practices for development: Borrowing software engineering best practices such as version control, code reviews, testing, and infrastructure as code to ensure high-quality and reliable code in data pipelines and workflows.

2. Automating and orchestrating data flows: Building CI/CD pipelines and using tools like Apache Airflow or Prefect to automate data flows, including tasks such as backfilling, scheduling, and gathering pipeline metrics.

3. Testing data quality at different stages: Implementing testing frameworks to ensure data quality at the source, during data preparation, and after transformations. This includes schema tests, SQL tests, and streaming tests.

4. Monitoring quality and performance metrics: Defining and monitoring technical, functional, and performance metrics across data flows using tools that capture and visualize these metrics, allowing meaningful actions to be taken.

5. Building a common data model: Establishing a common data model and data catalog to enable collaboration and understanding between different teams and stakeholders across the organization.

6. Empowering collaboration among data stakeholders: Creating cross-functional teams with no division between key functions, focusing on shared objectives, and enabling self-service access to data.

The Modern Computation Stack

The modern computation stack is a collection of tools and technologies centered around cloud data warehouses. It aims to provide ease of use, scalability, automation, and cost efficiency. The stack includes infrastructure ops, data planning, metadata, development, and data products and services layers. Various companies are providing solutions in each of these areas, catering to the growing needs of the data ops landscape.

Challenges in Data Curation

Data curation, the process of discovering, organizing, and sampling data for specific analytics or operational tasks, poses significant challenges in the modern computation stack. These challenges include the need for efficient tools and technologies to understand and curate large and diverse datasets, the time-consuming nature of the manual process, and the difficulty in keeping up with the ever-evolving data ecosystem.
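The sampling step of curation can be illustrated with a small sketch. It assumes image metadata is available as a list of dictionaries; the field names (`id`, `condition`) and the helper `stratified_sample` are hypothetical and chosen only for illustration. The idea is to draw the same number of items from each group so that rare conditions are not drowned out by common ones.

```python
import random
from collections import defaultdict


def stratified_sample(records, key, per_group, seed=0):
    """Pick up to `per_group` records from each group defined by `key`."""
    rng = random.Random(seed)  # fixed seed keeps the sample reproducible
    groups = defaultdict(list)
    for record in records:
        groups[record[key]].append(record)

    sample = []
    for label in sorted(groups):  # sorted for a stable group order
        members = groups[label]
        k = min(per_group, len(members))  # small groups contribute what they have
        sample.extend(rng.sample(members, k))
    return sample


# Hypothetical metadata: 90 daytime frames and 10 night frames.
frames = [{"id": i, "condition": "day"} for i in range(90)]
frames += [{"id": i, "condition": "night"} for i in range(90, 100)]

subset = stratified_sample(frames, key="condition", per_group=5)

counts = defaultdict(int)
for frame in subset:
    counts[frame["condition"]] += 1
print(dict(counts))
```

A uniform random sample of ten frames from this set would often contain one night frame or none; sampling per group keeps both conditions represented.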

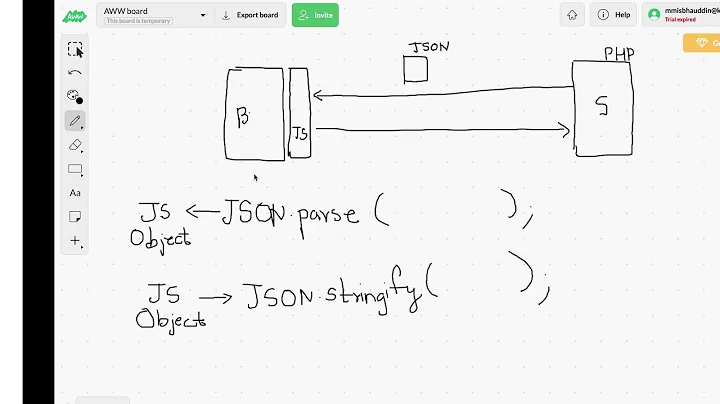

Automation and Orchestration of Data Flows

To overcome the challenges of data curation, automation and orchestration of data flows are crucial. This involves building CI/CD pipelines, using orchestrators like Apache Airflow or Prefect, and automating data testing and validation tasks. By automating these processes, data teams can achieve greater efficiency and scalability in their workflows.
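What an orchestrator does at its core can be sketched in a few lines: run each task only after the tasks it depends on have finished. The example below is not Airflow or Prefect code; it is a minimal illustration using Python's standard `graphlib` module, and the task names and the `run_pipeline` helper are assumptions made for the example.

```python
from graphlib import TopologicalSorter


def run_pipeline(tasks, dependencies):
    """Run each task once, after all of its upstream tasks have finished."""
    # `dependencies` maps a task name to the set of tasks it depends on.
    order = list(TopologicalSorter(dependencies).static_order())
    results = {}
    for name in order:
        # Hand each task the outputs of its direct upstream tasks.
        upstream = {dep: results[dep] for dep in dependencies.get(name, ())}
        results[name] = tasks[name](upstream)
    return order, results


# Hypothetical four-step data flow.
tasks = {
    "extract": lambda up: [3, 1, 2, None],
    "validate": lambda up: [x for x in up["extract"] if x is not None],
    "transform": lambda up: sorted(up["validate"]),
    "publish": lambda up: len(up["transform"]),
}
dependencies = {
    "validate": {"extract"},
    "transform": {"validate"},
    "publish": {"transform"},
}

order, results = run_pipeline(tasks, dependencies)
print(order)
print(results["publish"])
```

Production orchestrators add what this sketch leaves out, such as scheduling, retries, backfilling, and pipeline metrics.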

Ensuring Data Quality in the Data Lifecycle

Data quality is a critical aspect of data ops. Data must be tested and validated at various stages of the data lifecycle to ensure accuracy and integrity. This includes testing data at the source, validating it during transformations, and monitoring data quality and performance metrics. Tools such as Great Expectations provide a framework for implementing data quality testing practices.
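A schema test is the simplest of these checks. The sketch below is written in plain Python rather than against the Great Expectations API, and the schema, column names, and `check_schema` helper are hypothetical. It verifies that every row in a batch of label records has the expected columns with the expected types.

```python
def check_schema(rows, schema):
    """Return a list of readable violations; an empty list means the batch passed."""
    errors = []
    for index, row in enumerate(rows):
        for column, expected_type in schema.items():
            if column not in row:
                errors.append(f"row {index}: missing column '{column}'")
            elif not isinstance(row[column], expected_type):
                actual = type(row[column]).__name__
                errors.append(
                    f"row {index}: '{column}' should be "
                    f"{expected_type.__name__}, got {actual}"
                )
    return errors


# Hypothetical schema for image label records.
LABEL_SCHEMA = {"image_id": str, "label": str, "width": int, "height": int}

batch = [
    {"image_id": "a.jpg", "label": "cat", "width": 640, "height": 480},
    {"image_id": "b.jpg", "label": "dog", "width": "640", "height": 480},  # wrong type
    {"image_id": "c.jpg", "label": "cat", "width": 640},  # missing height
]

violations = check_schema(batch, LABEL_SCHEMA)
for violation in violations:
    print(violation)
```

Running the same check at the source, after preparation, and after each transformation makes it easier to see which stage introduced a problem.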

Monitoring Quality and Performance Metrics

Monitoring quality and performance metrics across data flows is essential for detecting and addressing issues promptly. Defining and visualizing technical, functional, and performance metrics helps identify potential bottlenecks and allows meaningful actions to be taken. Companies like Monte Carlo and Arize AI provide solutions for monitoring data quality and model observability.
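As a rough illustration of turning metrics into actions, the sketch below compares a few per-run measurements against thresholds and returns alerts. The metric names, the threshold values, and the `evaluate` helper are assumptions made for the example and do not reflect any vendor's product.

```python
from dataclasses import dataclass


@dataclass
class RunMetrics:
    rows_in: int             # rows read from the source
    rows_out: int            # rows written after transformation
    null_values: int         # null cells found in the output
    duration_seconds: float  # wall-clock time of the run


def evaluate(metrics, max_null_rate=0.05, max_drop_rate=0.10, max_duration=60.0):
    """Compare one run's metrics against thresholds and return alert messages."""
    alerts = []
    null_rate = metrics.null_values / metrics.rows_out if metrics.rows_out else 0.0
    drop_rate = 1 - metrics.rows_out / metrics.rows_in if metrics.rows_in else 0.0

    if null_rate > max_null_rate:
        alerts.append(f"null rate {null_rate:.1%} exceeds {max_null_rate:.0%}")
    if drop_rate > max_drop_rate:
        alerts.append(f"row drop rate {drop_rate:.1%} exceeds {max_drop_rate:.0%}")
    if metrics.duration_seconds > max_duration:
        alerts.append(f"run took {metrics.duration_seconds:.0f}s, limit is {max_duration:.0f}s")
    return alerts


# Hypothetical run: 15% of rows were dropped, which crosses the 10% threshold.
run = RunMetrics(rows_in=1000, rows_out=850, null_values=17, duration_seconds=42.0)
alerts = evaluate(run)
for alert in alerts:
    print(alert)
```

In practice the thresholds would come from historical runs or agreed service levels rather than fixed constants.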

The Importance of Collaboration among Data Stakeholders

Collaboration and teamwork among data stakeholders are crucial for successful data ops. The key is to build cross-functional teams that include data labeling managers, data engineers, data curators, and data ops leaders. These teams work together to ensure efficient data labeling, data transformation, data curation, and problem-solving. Tools that facilitate collaboration and knowledge sharing, such as data catalogs and shared metadata repositories, are essential for productive teamwork.

The Future of the Modern Computation Stack

The modern computation stack is continuously evolving as new technologies and approaches emerge. One such trend is the Machine Learning Operations (MLOps) stack, which focuses on the automation, monitoring, debugging, and governance of machine learning models. As the field of machine learning continues to advance, the modern computation stack will need to adapt to incorporate novel techniques such as large language models and advanced production monitoring tools.

Startup Opportunities in the ML Infrastructure Ecosystem

The rise of data ops and the modern computation stack has opened up exciting opportunities for startups in the ML infrastructure ecosystem. These opportunities include tooling for data engineering, data tracking, feature stores, automated data augmentation, and no-code ML platforms. As the demand for ML infrastructure increases, there is immense potential for entrepreneurs to build innovative solutions that address the pain points and challenges faced by data teams.

FAQ

Q: What is Data Ops?

A: Data Ops is a practice that brings data teams together to improve the delivery capabilities of analytical teams. It covers data quality, engineering, and security, with the goal of obtaining valuable insights from data.

Q: Why is Data Ops important?

A: Data Ops is important because it enables efficient handling and processing of complex data, ensures collaboration and productivity among data teams, and facilitates the extraction of meaningful insights from data.

Q: How does Data Ops relate to DevOps?

A: Data Ops borrows principles and best practices from DevOps, applying them specifically to the data workflows and processes used in analytical teams.

Q: What are some challenges in data curation?

A: Challenges in data curation include the need for efficient tools to handle large and diverse datasets, the labor-intensive process of manual labeling and curation, and the difficulty of keeping up with the evolving data ecosystem.

Q: How can automation and orchestration improve data flows?

A: Automation and orchestration of data flows can improve efficiency and scalability by automating tasks such as data testing, transformation, and validation, and ensuring smooth data integration and delivery across workflows.

Q: How can data quality be ensured in the data lifecycle?

A: Data quality can be ensured through testing and validation at various stages of the data lifecycle, using tools and techniques such as schema tests, SQL tests, and monitoring of technical, functional, and performance metrics.

Q: What is the future of the modern computation stack?

A: The future of the modern computation stack involves incorporating MLOps practices, large language models, and advanced production monitoring tools.

Q: What startup opportunities are there in the ML infrastructure ecosystem?

A: There are many startup opportunities in the ML infrastructure ecosystem, including tools for data engineering, data tracking, feature stores, automated data augmentation, and no-code ML platforms, among others.